When processing with data, we need a language or engine that can consume all the threads in your PC to work on that data.

Now coming to Big data, we have this issue in hand and Apache Spark comes with its cape.

Apache Spark is a data processing engine. It's services can be used via an API and works best with Scala. It is developed specifically to work on Big data and ML.

When processing with data, we need a language or engine that can consume all the threads in your PC to work on that data.

Now coming to Big data, we have this issue in hand and Apache Spark comes with its cape.

Apache Spark is a data processing engine. It's services can be used via an API and works best with Scala. It is developed specifically to work on Big data and ML.

It went open source in 2010 under BSD lisence and later taken into Apache Software Foundation (biggest open source foundation) in 2013

Apache spark has advance DAG (that graphs you learned during placements- here it is used) execution engine and in memory computing. Spark processes and retains data in memory for subsequent steps, whereas MapReduce processes data on disk. As a result, for smaller workloads, Spark's data processing speeds are up to 100x faster than MapReduce, its reason why spark is preferred over hadoops mapreduce. Thus it ended the era of Hadoop controlling big data world since its launch in 2014.

Hadoop vs Spark

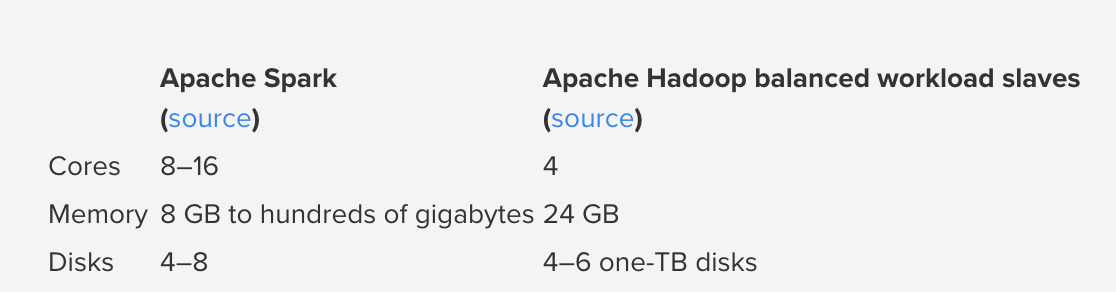

Even though Spark came out to actually beat the mapReduce of hadoop but it depends on the use cases. Spark processes data in RAM, while Hadoop mapReduce persists data on disk after each step. But the Spark needs a lot of memory, So if the data is too big to fit entirely into memory, then Spark could suffer major performance degradations. But Spark has great caching as a result iterative computations on the same data are faster in Spark. Also, Spark provides lot more than just reading and writing. Hadoop just a storage system(HDFS) plus MapReduce

Costing

The memory in the Spark cluster should be at least as large as the amount of data you need to process because the data has to fit in memory for optimal performance. If you need to process extremely large quantities of data, Hadoop will definitely be the cheaper option, since hard disk space is much less expensive than memory space.

The memory in the Spark cluster should be at least as large as the amount of data you need to process because the data has to fit in memory for optimal performance. If you need to process extremely large quantities of data, Hadoop will definitely be the cheaper option, since hard disk space is much less expensive than memory space.

Does my data need to fit in memory to use Spark?

After reading above part, you'd be thinking big data works on so much data so will spark able to work on 1TB of data? This is answered at apaches official website:- No. Spark's operators spill data to disk if it does not fit in memory, allowing it to run well on any sized data. Likewise, cached datasets that do not fit in memory are either spilled to disk or recomputed on the fly when needed, as determined by the RDD's.

Meaning, consider you have a resource with 64GB memory and you are processing data of 1TB, So now you need to make sure that your data partitions are lesser than 64GB in size. Even if it does, it will spill that data to HDD, which will work but will take more time.